
The term byte was coined by Werner Buchholz in June 1956, during the early design phase for the IBM Stretch computer, which had addressing to the bit and variable field length (VFL) instructions with a byte size encoded in the instruction. It is a deliberate respelling of bite to avoid accidental mutation to bit. Another origin of byte for bit groups smaller than a computer's word size, and in particular groups of four bits, is on record by Louis G. The unit symbol for the byte was designated as the upper-case letter B by the International Electrotechnical Commission (IEC) and the Institute of Electrical and Electronics Engineers (IEEE). Internationally, the unit octet, symbol o, explicitly defines a sequence of eight bits, eliminating the potential ambiguity of the term "byte".

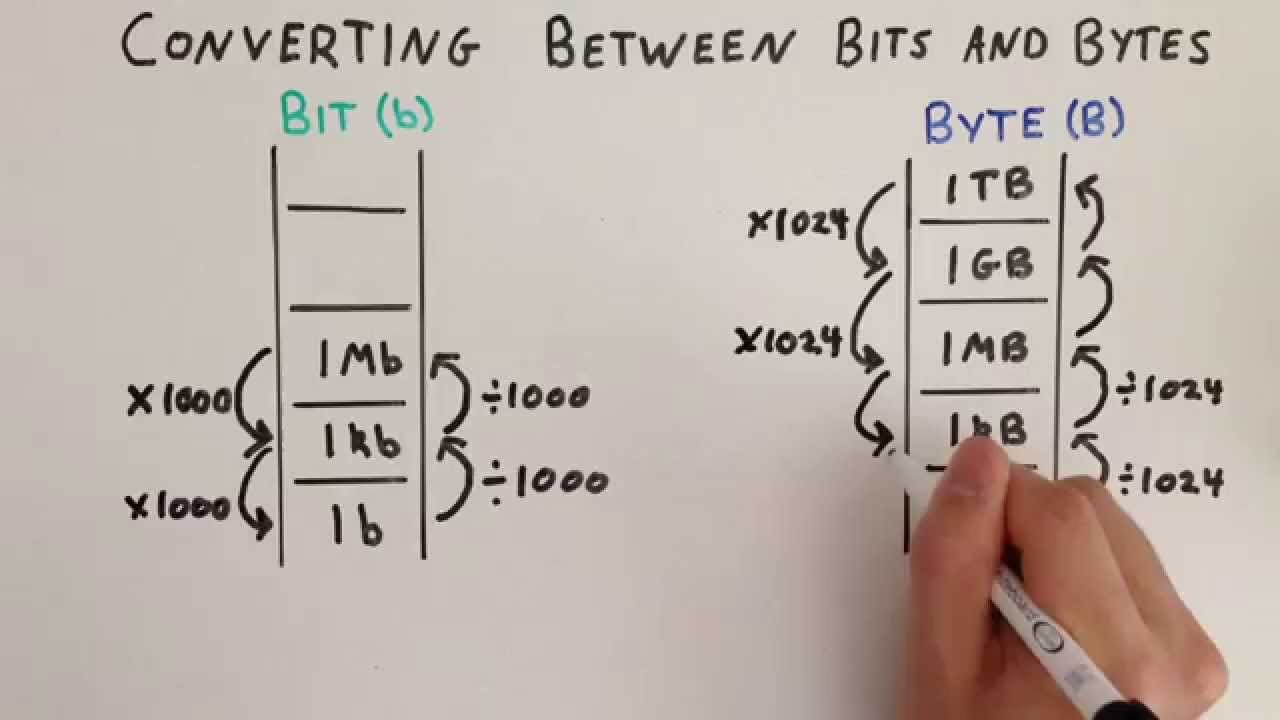

The size of the byte has historically been hardware-dependent, and no definitive standard mandated it. The six-bit character code was an often-used implementation in early encoding systems, and computers using six-bit and nine-bit bytes were common in the 1960s. These systems often had memory words of 12, 18, 24, 30, 36, 48, or 60 bits, corresponding to 2, 3, 4, 5, 6, 8, or 10 six-bit bytes. In this era, bit groupings in the instruction stream were often referred to as syllables or slabs, before the term byte became common.

The modern de facto standard of eight bits, as documented in ISO/IEC 2382-1:1993, is a convenient power of two permitting the binary-encoded values 0 through 255 for one byte, as 2 to the power of 8 is 256. The international standard IEC 80000-13 codified this common meaning. Many types of applications use information representable in eight or fewer bits, and processor designers commonly optimize for this usage. The popularity of major commercial computing architectures has aided in the ubiquitous acceptance of the 8-bit byte. Modern architectures typically use 32- or 64-bit words, built of four or eight bytes, respectively.
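
The arithmetic in this paragraph can be checked with a short Python sketch. The helper names `byte_range` and `six_bit_bytes_per_word` are made up for illustration and do not come from any standard or library; the word sizes are the ones listed in the text.

```python
def byte_range(bits: int = 8) -> tuple[int, int]:
    """Return the (lowest, highest) unsigned value that fits in `bits` bits."""
    return 0, 2**bits - 1


def six_bit_bytes_per_word(word_bits: int) -> int:
    """Return how many six-bit bytes make up a word of `word_bits` bits."""
    count, remainder = divmod(word_bits, 6)
    if remainder:
        raise ValueError(f"{word_bits} is not a multiple of six bits")
    return count


# Historical word sizes named in the text, in bits.
WORD_SIZES = [12, 18, 24, 30, 36, 48, 60]

print(2**8)                                             # 256
print(byte_range(8))                                    # (0, 255)
print([six_bit_bytes_per_word(w) for w in WORD_SIZES])  # [2, 3, 4, 5, 6, 8, 10]
print(32 // 8, 64 // 8)                                 # 4 8
```

The last line shows the modern case: a 32-bit word holds four 8-bit bytes and a 64-bit word holds eight.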

The bits in an octet are usually numbered from 0 to 7 or from 7 to 0, depending on the bit endianness. The first bit is number 0, making the eighth bit number 7.
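
As a rough illustration of the two numbering directions, the following Python sketch reads a single bit from an octet under each convention. The function names `bit_lsb0` and `bit_msb0` are hypothetical, and the sketch assumes that "0 to 7" counts upward from the least significant bit while "7 to 0" counts from the most significant bit.

```python
def bit_lsb0(octet: int, n: int) -> int:
    """Return bit n of an octet, where bit 0 is the least significant bit."""
    if not 0 <= octet <= 255 or not 0 <= n <= 7:
        raise ValueError("octet must be 0..255 and n must be 0..7")
    return (octet >> n) & 1


def bit_msb0(octet: int, n: int) -> int:
    """Return bit n of an octet, where bit 0 is the most significant bit."""
    if not 0 <= octet <= 255 or not 0 <= n <= 7:
        raise ValueError("octet must be 0..255 and n must be 0..7")
    return (octet >> (7 - n)) & 1


octet = 0b1000_0010  # decimal 130

print(bit_lsb0(octet, 1), bit_lsb0(octet, 7))  # 1 1
print(bit_msb0(octet, 0), bit_msb0(octet, 6))  # 1 1
```

Both calls pick out the same two set bits; only the index assigned to each position changes.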

The byte is a unit of digital information that most commonly consists of eight bits. Historically, the byte was the number of bits used to encode a single character of text in a computer, and for this reason it is the smallest addressable unit of memory in many computer architectures. To disambiguate arbitrarily sized bytes from the common 8-bit definition, network protocol documents such as the Internet Protocol (RFC 791) refer to an 8-bit byte as an octet.
